#BASE64 ENCODING SIZE INCREASE CODE#

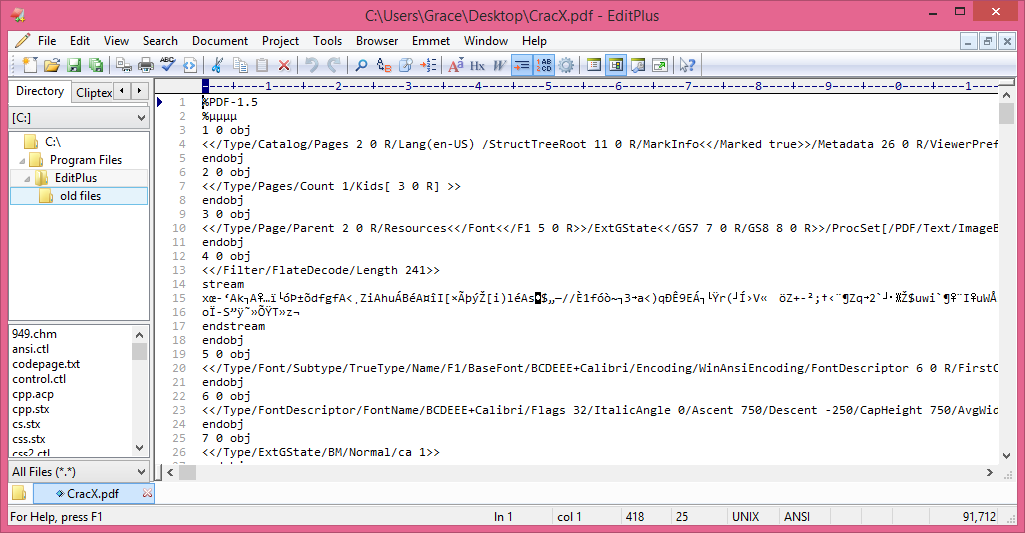

The first thing this code does is to stack allocate two byte arrays. This method is defined as an extension method on the Guid type for ease of use. This is the complete code; don’t worry, we’ll step through it line by line to understand what it does. Spoiler alert: this has fewer allocations! We’ll see the results soon. Let’s take a look at the final version of the code that I ended up with (at least for now). Mystery solved! 192 B seemed to be the smallest allocation size I noted during my benchmarking, with the worst case being 352 B. This is an optimisation which avoids new string allocations unless there’s a need for them. Looking at the corefx source code on GitHub for the Replace method, I noticed that, if the character being replaced is not found in the string, the original string is returned unchanged. There’s no guarantee that I’ll always have unsafe characters to replace in the base64 encoded string.
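To make the stack-allocation idea concrete, here is a minimal sketch of how such an extension method could look. It is not the code from the post: the class and method names are hypothetical, and it assumes .NET Core 2.1 or later for `Guid.TryWriteBytes`, `Base64.EncodeToUtf8` and the `string(ReadOnlySpan<char>)` constructor. It keeps the same character mapping as the original one-liner.

```csharp
using System;
using System.Buffers.Text;

// Hypothetical sketch, not the post's actual implementation.
public static class GuidSpanEncodingSketch
{
    public static string ToUrlSafeBase64Span(this Guid guid)
    {
        // Two stack-allocated buffers: the raw GUID bytes and the
        // base64 output as UTF-8 bytes (16 bytes encode to 24).
        Span<byte> guidBytes = stackalloc byte[16];
        Span<byte> encodedBytes = stackalloc byte[24];

        guid.TryWriteBytes(guidBytes);
        Base64.EncodeToUtf8(guidBytes, encodedBytes, out _, out _);

        // Copy the first 22 bytes, which skips the two '=' padding
        // characters, swapping the URL-unsafe characters on the way.
        Span<char> chars = stackalloc char[22];
        for (int i = 0; i < chars.Length; i++)
        {
            byte b = encodedBytes[i];
            if (b == (byte)'/')
            {
                chars[i] = '-';
            }
            else if (b == (byte)'+')
            {
                chars[i] = '_';
            }
            else
            {
                chars[i] = (char)b;
            }
        }

        // The final string is the only heap allocation in this sketch.
        return new string(chars);
    }
}
```

Because the buffers live on the stack and the replacement happens in place, the intermediate byte array and the intermediate strings of the original approach are not needed here.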

My first conclusion when anything unexpected occurs is that I’ve screwed up somewhere! In this case, I realised that it might perhaps be the behaviour of the Replace method. This initially looked like a problem with how I was exercising the code or running my benchmark. Curiously, on some occasions, I was getting different allocation counts. With the benchmark results in hand, my goal was to improve upon the 192 bytes allocated per iteration. As an aside: I ran the benchmark a few times. In this case, I directly exercise the original code as a baseline benchmark. After running the benchmark, the summary results are as follows: If you want to learn more about benchmarking, you can read my previous benchmarking blog post.
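One way to check whether Replace is behind the varying allocation counts is to compare object references before and after the call. The helper below is a hypothetical sketch (the names are mine), and the "same instance" result is what I would expect on .NET Core rather than a documented guarantee.

```csharp
using System;

// Hypothetical helper for observing Replace's behaviour.
public static class ReplaceSketch
{
    // True when Replace handed back the very same string object,
    // meaning no new string was allocated for this call.
    public static bool ReturnsSameInstance(string input, string oldValue, string newValue)
    {
        string result = input.Replace(oldValue, newValue);
        return ReferenceEquals(input, result);
    }
}
```

For example, `ReplaceSketch.ReturnsSameInstance("abc", "/", "-")` should be true because there is nothing to replace, while `ReplaceSketch.ReturnsSameInstance("a/c", "/", "-")` is false because a new string has to be created.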

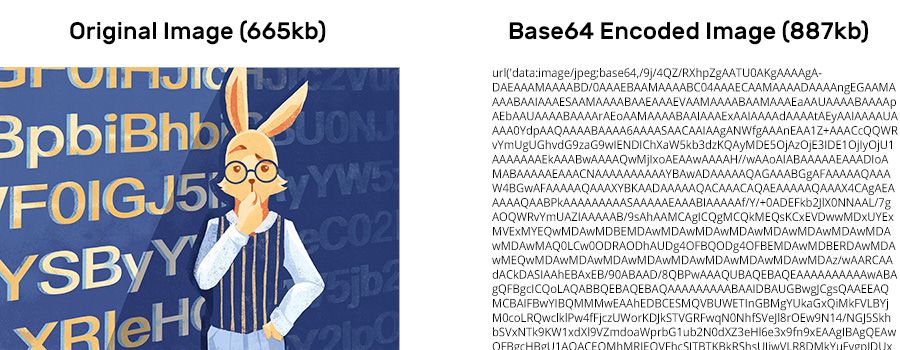

Therefore, I set about writing a new approach to base64 encode GUIDs, which should hopefully be faster, but most importantly reduce the number of bytes allocated. Before starting work, I took the first important step, which was to benchmark the existing code. This service needs to handle hundreds of requests per second if we’re to avoid heavy scaling. Replace, for example, causes a new string to be allocated when replacing characters because strings are immutable. For general cases, this might be okay, but as we take our service from a proof of concept stage to an alpha/beta state, we know the request volumes will increase. There’s the creation of a byte array and the calls to Replace that we should be wary of. This code works and it does the job, but it allocates a fair amount in the process. When base64 encoding a GUID, we’re guaranteed to get two padding characters ‘=’ at the end of the string. Finally, it removes any trailing padding characters.
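The guarantee of two padding characters follows from the arithmetic of base64: every 3 input bytes become 4 output characters, and a GUID is 16 bytes, which leaves one byte over. A small sketch of that calculation (the helper names are hypothetical):

```csharp
using System;

// Hypothetical helpers illustrating base64 length and padding arithmetic.
public static class Base64PaddingSketch
{
    // Encoded length for n input bytes: 4 * ceil(n / 3).
    public static int EncodedLength(int byteCount) => 4 * ((byteCount + 2) / 3);

    // Number of trailing '=' characters: (3 - n mod 3) mod 3.
    public static int PaddingCount(int byteCount) => (3 - byteCount % 3) % 3;
}
```

For the 16 bytes of a GUID this gives an encoded length of 24 and a padding count of 2, so stripping the padding always leaves 22 characters.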

Since we need our identifiers to be safe for use in URL query strings, this code also replaces any unsafe characters using the Replace method. This array is passed to the Convert.ToBase64String method which returns an encoded string. This code first gets a byte array from the GUID. This code is borrowed from this example posted in a popular StackOverflow question about base64 encoding GUIDs.
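The steps described here can be written out as a small extension method. This is only a sketch wrapping the one-liner quoted in the post; the class and method names are hypothetical.

```csharp
using System;

// Hypothetical wrapper around the original one-liner.
public static class GuidEncodingSketch
{
    public static string ToUrlSafeBase64(this Guid guid)
    {
        // Allocation 1: a 16-byte array holding the GUID.
        byte[] bytes = guid.ToByteArray();

        // Allocation 2: a 24-character base64 string ending in "==".
        string encoded = Convert.ToBase64String(bytes);

        // Each Replace may allocate another string. Note that this
        // mapping ('/' to '-', '+' to '_') follows the post and is the
        // reverse of the RFC 4648 base64url alphabet.
        return encoded
            .Replace("/", "-")
            .Replace("+", "_")
            .Replace("=", "");
    }
}
```

Calling `Guid.NewGuid().ToUrlSafeBase64()` yields a 22-character identifier, compared with 36 characters for the default hyphenated GUID string.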

Benchmarking the Original Code

During the initial proof of concept stage, I’d used some quick and dirty code to get the base64 encoded string… `Convert.ToBase64String(_guid.ToByteArray()).Replace("/", "-").Replace("+", "_").Replace("=", "")` A design goal here was to limit the increase of the document storage size by reducing the length of the unique identifier strings that will be included in the document. A recent new requirement is to generate and store four additional identifiers. We’re doing this at scale for about 18 million new documents every day.

Scenario

For a service that I’m currently working on, in a few places we need to generate unique identifiers which are included on some documents we index to Elasticsearch. It’s been a little while since my last high-performance post, but my use of the techniques and features continues! In this post, I want to present a more practical example which I hope will help to illustrate a real-world use case for some of the new .NET Core performance-focused API changes.